Overall, our experiments demonstrate that properly performed pretraining significantly increases the performance of tabular DL models, which often leads to their superiority over GBDTs.

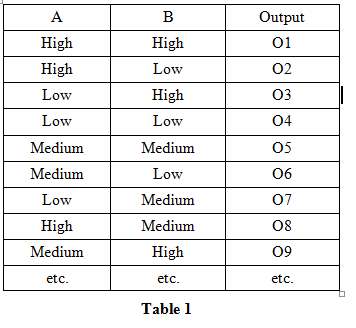

Deep learning has proved groundbreaking in many domains, such as computer vision, natural language processing, and signal processing. For tabular data, however, the necessity of deep learning is still an open question, addressed by a large number of research efforts. The recent literature on tabular DL proposes several deep architectures reported to be superior to traditional shallow models like Gradient Boosted Decision Trees (GBDT). Unlike GBDT, deep models can additionally benefit from pretraining, which is a workhorse of DL for vision and NLP.

For tabular problems, several pretraining methods have been proposed. However, it is not entirely clear whether pretraining provides consistent, noticeable improvements or which method should be used, because the methods are often not compared to each other, or the comparison is limited to the simplest MLP architectures. In this work, we aim to identify best practices for pretraining tabular DL models that can be universally applied to different datasets and architectures. Among our findings, we show that using the object target labels during the pretraining stage benefits downstream performance, and we advocate several target-aware pretraining objectives.

The goal is to define a model based on the number of continuous columns plus the number of categorical columns and their embeddings. The output is a single float value because this is a regression task.
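The model described above (continuous columns plus embedded categorical columns, with a single float output for regression) can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the paper's architecture: the class `TabularRegressor`, its parameters, and the single linear output layer are hypothetical choices made for brevity. Real tabular DL models typically add hidden layers (e.g. an MLP) and are trained with a DL framework.

```python
import random


class TabularRegressor:
    """Hypothetical minimal regressor: one embedding table per categorical
    column, embeddings concatenated with the continuous features, then a
    single linear unit that produces one float."""

    def __init__(self, n_cont, cat_cardinalities, emb_dims, seed=0):
        if len(cat_cardinalities) != len(emb_dims):
            raise ValueError("need one embedding size per categorical column")
        rng = random.Random(seed)
        self.n_cont = n_cont
        # One table per categorical column: `cardinality` rows of `dim` floats.
        self.embeddings = [
            [[rng.gauss(0.0, 0.1) for _ in range(dim)] for _ in range(card)]
            for card, dim in zip(cat_cardinalities, emb_dims)
        ]
        # Input width = number of continuous columns + total embedding width.
        self.in_features = n_cont + sum(emb_dims)
        # Assumption: a single linear output layer instead of a full MLP.
        self.weights = [rng.gauss(0.0, 0.1) for _ in range(self.in_features)]
        self.bias = 0.0

    def forward(self, x_cont, x_cat):
        """x_cont: list of floats; x_cat: list of integer category indices."""
        if len(x_cont) != self.n_cont or len(x_cat) != len(self.embeddings):
            raise ValueError("unexpected number of input columns")
        features = list(x_cont)
        for table, index in zip(self.embeddings, x_cat):
            features.extend(table[index])  # look up this category's embedding
        # Regression task: the output is a single float.
        return self.bias + sum(w * f for w, f in zip(self.weights, features))


model = TabularRegressor(n_cont=3, cat_cardinalities=[5, 10], emb_dims=[2, 4])
print(model.in_features)  # 3 continuous + 2 + 4 embedding dims = 9
print(model.forward([0.1, -1.2, 3.0], [4, 7]))
```

The input width is fixed by the column counts and the chosen embedding sizes, which is why the model must be defined from the number of continuous columns and the categorical columns with their embeddings.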

From the abstract of the paper "Revisiting Pretraining Objectives for Tabular Deep Learning" by Ivan Rubachev and three other authors: recent deep learning models for tabular data currently compete with the traditional ML models based on decision trees (GBDT).
